The Context Inversion

Ever since I started building with FastMCP back in April 2025, I've been on a journey to figure out how I can give my AIs more context. More memory. More session awareness. More. I spent months building MCP servers and tools, all in pursuit of that. A checkpoint system by November. A Second Mind taking shape by January. Nearly a year of work, all pointed in the same direction.

Then February 2026 hit. I was restructuring what I had built into an application, and something unexpected surfaced. I hadn't been building a context engine for my AIs. I had been building one for me.

There's a quiet inversion happening that I think deserves more attention. All this infrastructure we're building to give AI more context? It can point the other way.

The Memory Arms Race

We've all seen it. The clips, the article titles, the podcasts. AI is either the second coming or completely overhyped because it "doesn't even work." And everything in between. For the last two years, the industry has been pushing for more "intelligent" AIs that can better mimic a real person, or better yet, surpass human thinking. A big part of that work is the ability to store and then dynamically retrieve "memories."

It's not just one company chasing this. Mem0, Claude's memory, ChatGPT's memory, OpenClaw. All converging on persistent AI memory from different angles, all at once.

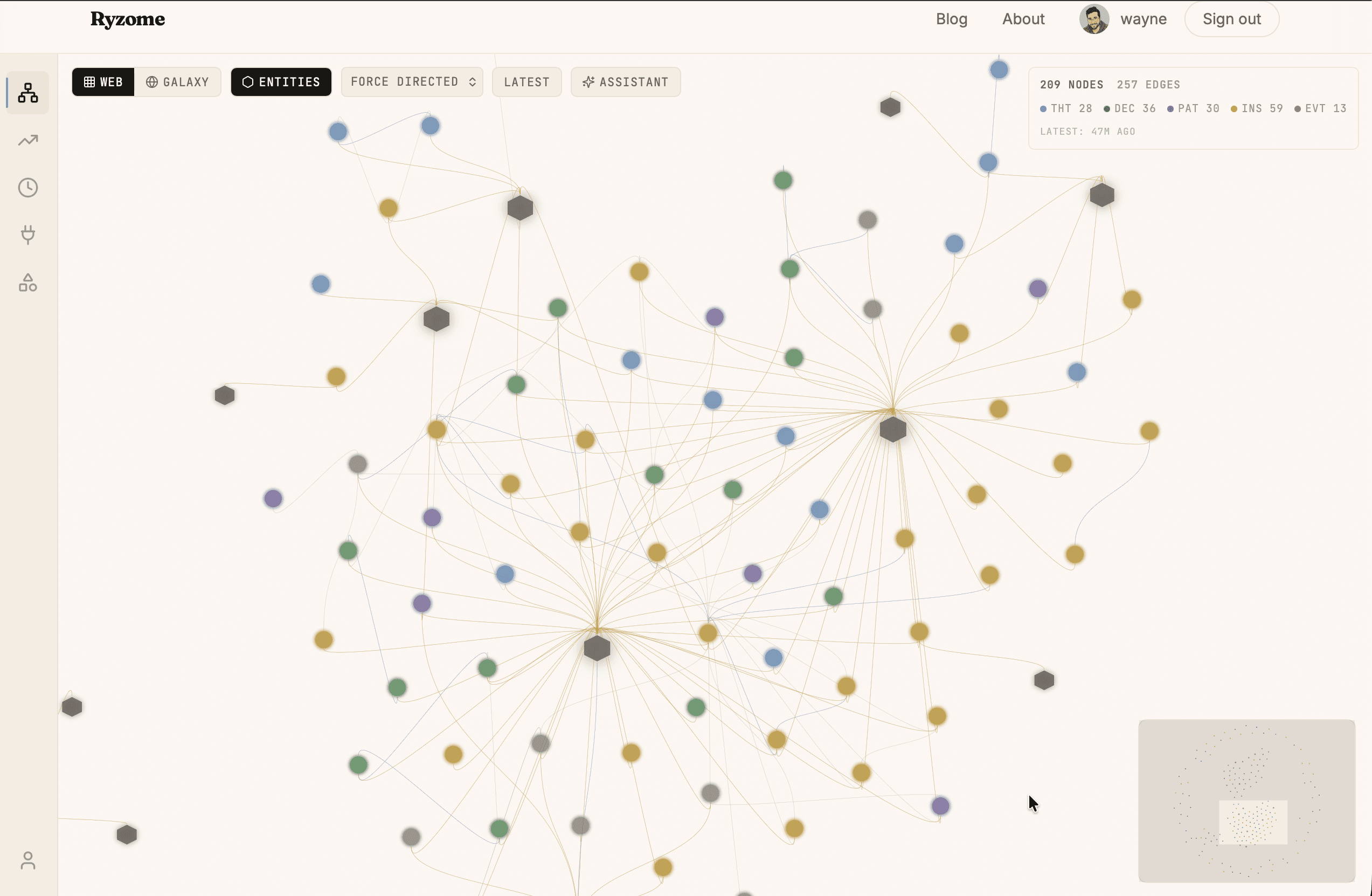

The reason is simple. We want AI conversations that last. Not a single session that evaporates, but interactions that stretch across months and years, accumulating context the way a colleague does. No platform does this well out of the box yet. So I built my own. Checkpoints for continuity. A knowledge graph for meaning. One remembers the thread. The other remembers why the thread mattered.

Here's the problem that forced my hand. AI tools that run long sessions eventually hit a wall. The conversation gets too large, so the system compresses it to keep going. Every compression loses something. The AI picks up the thread afterward, but it drifts. It's like handing someone your notes from a meeting instead of having them sit through the whole thing. The gist survives. The nuance doesn't. And once the session has been compressed enough times, it's better to start fresh. Checkpoints solved this for me. I snapshot the state before things degrade, and pick up in a new session with almost no friction.
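To make the checkpoint idea concrete, here is a minimal sketch of what snapshotting and resuming could look like. It is not my actual implementation. The function names, the JSON layout, and the fields `summary` and `open_threads` are all assumptions made up for illustration.

```python
import json
import time
from pathlib import Path
from typing import List, Optional


def save_checkpoint(directory: Path, summary: str, open_threads: List[str]) -> Path:
    """Write a snapshot of the session state to a numbered JSON file."""
    directory.mkdir(parents=True, exist_ok=True)
    # Hypothetical snapshot shape: a summary plus the threads still in play.
    snapshot = {
        "created_at": time.time(),
        "summary": summary,
        "open_threads": open_threads,
    }
    # Zero-padded index keeps filenames unique and sortable by name.
    index = len(list(directory.glob("checkpoint-*.json"))) + 1
    path = directory / f"checkpoint-{index:06d}.json"
    path.write_text(json.dumps(snapshot, indent=2), encoding="utf-8")
    return path


def load_latest_checkpoint(directory: Path) -> Optional[dict]:
    """Return the most recent snapshot, or None if there is nothing to resume."""
    if not directory.exists():
        return None
    files = sorted(directory.glob("checkpoint-*.json"))
    if not files:
        return None
    return json.loads(files[-1].read_text(encoding="utf-8"))
```

A new session would call `load_latest_checkpoint` first and hand the result to the AI as its opening context, instead of relying on a conversation that has already been compressed several times.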

I didn't set out to have an opinion about persistent AI memory. I just kept building until I was living inside it.

The Thought Problem

Checkpoints solved the forgetting problem. But only half of it. Sessions stay intact, context carries forward. What they can't do is tell you which moments actually mattered. That's a different kind of memory. Not the kind that replays events in order, but the kind that pulls a single insight from three weeks ago and connects it to something you're thinking about right now. I built a knowledge graph for that. And I built it because connecting those dots on my own has never been something I could do.

I've been notoriously bad at capturing my thoughts, let alone connecting them. I'll have a compelling insight one morning and spend the next hour turning it over in my mind before the river of life carries me away. Then maybe a month later, something happens that reminds me of that thought. And as hard as I try, I can't recover the feeling I had when it was so alive. I chastise myself for not writing it down, but the next time, I still won't.

And if I do throw a note into my phone, it's gone until I stumble across it four years later when I'm looking for that cookie recipe I saved, sometime around Christmas.

Some people are good at holding onto those thoughts and acting on them without ever writing anything down. Others are more disciplined, with manual systems they actually maintain. I've always struggled in that regard. The friction was always too much. I'd get sidetracked. Distracted. I'd promise myself I'd come back to it, but I never did.

Seagulls and Rope

There's a scene in "James and the Giant Peach" that has stayed with me since I was a kid. The peach is floating in the ocean, about to be devoured by a shark. James decides to use spider webbing to lasso seagulls and tie them to the peach, hoping that if he catches enough, the peach will lift off before he and his friends are swallowed whole. It works, of course, and off they go.

I've always thought about ideas the same way. I'm standing on a cliff with a handful of rope, watching thoughts float by like seagulls over open water. If I catch one, I can pull it close and hold it. But only as long as I keep the rope tight. I only have two hands. Eventually I have to let go, and once I do, the thought never comes back whole.

Something to Tie the Rope To

Building an MCP server for capturing thoughts, decisions, patterns, and insights is like finally having something to tie the rope to. The knowledge graph lets me release my grip on one thought because the next thought, the evolution of it, is already connected. The graph holds the relationship. The AI can traverse it instantly.

What I've realized in this journey is that by working to give AIs better memory, I've instead given myself access to what was once a fleeting thought. I have a catalog of thoughts I can build on and reshape. I can pick up patterns I noticed weeks ago. I can focus on something that keeps circling back. I can think strategically about what I care about, both the things I know and the things I'm only starting to recognize.

What I didn't expect is that it makes the evolution visible. I can trace how a passing thought became a pattern, and how that pattern became a decision. My own cognitive trail, laid out in front of me.
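For the technically curious, here is a small sketch of the idea: typed nodes, an "evolved into" relationship between them, and a walk that lays out the trail. This is an illustration under my own simplifying assumptions, not the real server. `Node`, `ThoughtGraph`, `add`, and `trail` are hypothetical names, and a real graph would carry many more kinds of relationships.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Node:
    node_id: str
    kind: str  # e.g. "thought", "pattern", "decision", "insight"
    text: str


@dataclass
class ThoughtGraph:
    nodes: Dict[str, Node] = field(default_factory=dict)
    # Maps a node id to the ids of the nodes it evolved into.
    evolved_into: Dict[str, List[str]] = field(default_factory=dict)

    def add(self, node_id: str, kind: str, text: str,
            evolved_from: Optional[str] = None) -> Node:
        """Store a node and, optionally, link it to the node it grew out of."""
        if evolved_from is not None and evolved_from not in self.nodes:
            raise KeyError(f"unknown parent node: {evolved_from}")
        node = Node(node_id, kind, text)
        self.nodes[node_id] = node
        if evolved_from is not None:
            self.evolved_into.setdefault(evolved_from, []).append(node_id)
        return node

    def trail(self, start_id: str) -> List[Node]:
        """Follow the first 'evolved into' link from each node, stopping on a cycle."""
        result: List[Node] = []
        seen = set()
        current: Optional[str] = start_id
        while current is not None and current not in seen:
            seen.add(current)
            result.append(self.nodes[current])
            successors = self.evolved_into.get(current, [])
            current = successors[0] if successors else None
        return result


graph = ThoughtGraph()
graph.add("t1", "thought", "Compressed sessions drift")
graph.add("p1", "pattern", "Drift grows with each compression", evolved_from="t1")
graph.add("d1", "decision", "Snapshot state before things degrade", evolved_from="p1")
print(" -> ".join(node.kind for node in graph.trail("t1")))
# prints: thought -> pattern -> decision
```

The point of the sketch is the release of grip: once the link from `t1` to `p1` is stored, I no longer have to hold both in my head for the connection to survive.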

Never Lose the Thread

This pattern extends well beyond ideation. Journaling. Sales engineering. Writing a novel. Blogging. Anything where continuity matters. Imagine picking up a conversation that has spanned months with the clarity of the moment it began.

And the friction is almost zero. Because this is MCP-driven, the tools exist natively inside whatever AI agent you're working with. They can be called automatically by the agent as you work, or manually when you want to deliberately distill something. There's no separate app to open, no workflow to remember. The thought capture is embedded in the conversation itself, which means the very act of thinking with your AI becomes the act of remembering.

Because everyone thinks differently, the shape of that graph looks different for everyone. A PhD researcher structures their thinking around hypotheses. A politician tracks constituent engagement. A novelist maps character arcs across timelines. Now imagine those cognitive styles becoming shareable. Templates you can publish for others who think the way you do. That quiet inner world most of us never share becomes visible, not just to ourselves, but to a collective, should we choose.

Sometimes a thought is just a thought. But given enough time and input, that thought might have been a signal. It might have been hinting at something larger. The beginning of a pattern. The end of one. These stories surround us, but we rarely see them whole.

I set out to give my AI more context. I ended up giving myself a way to finally hold onto the things that matter. I think personal cognition tools are going to reshape how we collaborate and communicate. And I think the quiet inversion at the center of all this, where the infrastructure you build for the machine turns out to be for you, is only the beginning.